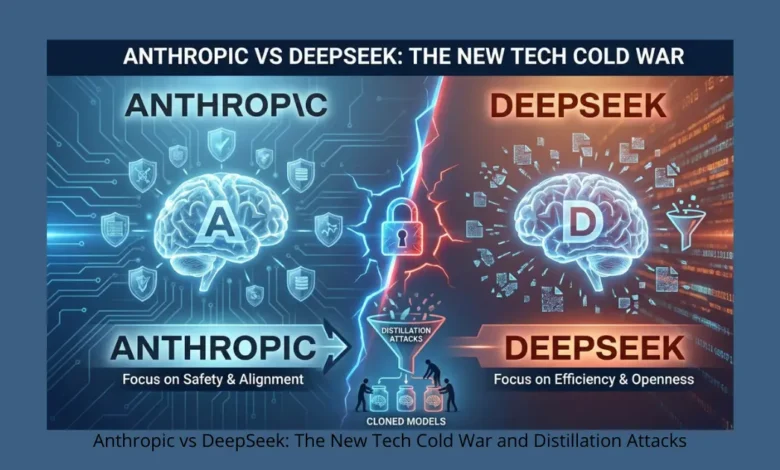

Anthropic vs DeepSeek: The New Tech Cold War and Distillation Attacks

Feenanoor – 02/03/2026 – Anthropic vs DeepSeek has become the defining headline of a new "Tech Cold War" in the artificial intelligence sector. Beyond the race for larger datasets and faster chips, a subtler conflict is brewing between these two giants. This confrontation isn't just about market share; it's about who owns the "intelligence" a model displays. As accusations of reverse engineering and data scraping fly, the industry is witnessing a shift in how AI models are built, protected, and benchmarked.

The Core of Anthropic vs DeepSeek: Understanding Distillation Attacks

At the heart of the Anthropic vs DeepSeek dispute is what Anthropic describes as a "Distillation Attack." This isn't a traditional data breach but a form of reverse engineering: the attacker never accesses Anthropic's servers or model weights, only the answers the model returns. According to reports, thousands of bot accounts allegedly bombarded Claude (Anthropic's flagship model) with millions of complex queries. The captured responses, which expose Claude's sophisticated reasoning patterns, were then allegedly used to train DeepSeek's models, effectively transferring the "intelligence" of a multi-billion-dollar model into a cheaper, leaner one without bearing the R&D costs.
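
The mechanism is easier to see in miniature. The sketch below is a toy illustration of black-box distillation (also called model extraction), not a reconstruction of what any company actually did. A hypothetical `teacher` function stands in for the large model and only ever returns answers, and a tiny logistic-regression "student" is fitted to the harvested (query, answer) pairs. Every name and parameter value here is an assumption chosen for illustration; distilling a real language model means fine-tuning a neural network on generated text, at vastly greater scale.

```python
import math
import random

# Hypothetical "teacher": a black box that only returns answers, like an API
# that never exposes its internal weights. (Toy stand-in, not a real model.)
def teacher(x1: float, x2: float) -> int:
    return 1 if 2.0 * x1 - x2 + 0.5 > 0 else 0

def sigmoid(z: float) -> float:
    # Numerically safe logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def distill(num_queries: int = 1000, epochs: int = 300, lr: float = 1.0, seed: int = 0):
    """Query the teacher, then fit a logistic-regression 'student' to its answers."""
    rng = random.Random(seed)
    # Step 1: harvest (query, answer) pairs from the black box.
    data = []
    for _ in range(num_queries):
        x1, x2 = rng.uniform(-1, 1), rng.uniform(-1, 1)
        data.append((x1, x2, teacher(x1, x2)))
    # Step 2: train the student on the harvested answers (full-batch gradient descent on log-loss).
    w1 = w2 = b = 0.0
    n = len(data)
    for _ in range(epochs):
        g1 = g2 = gb = 0.0
        for x1, x2, y in data:
            err = sigmoid(w1 * x1 + w2 * x2 + b) - y  # d(log-loss)/d(logit)
            g1 += err * x1
            g2 += err * x2
            gb += err
        w1 -= lr * g1 / n
        w2 -= lr * g2 / n
        b -= lr * gb / n
    return w1, w2, b

def agreement(student, num_tests: int = 2000, seed: int = 1) -> float:
    """Fraction of fresh inputs on which the student and the teacher give the same answer."""
    w1, w2, b = student
    rng = random.Random(seed)
    same = 0
    for _ in range(num_tests):
        x1, x2 = rng.uniform(-1, 1), rng.uniform(-1, 1)
        pred = 1 if w1 * x1 + w2 * x2 + b > 0 else 0
        same += pred == teacher(x1, x2)
    return same / num_tests

if __name__ == "__main__":
    student = distill()
    print(f"student/teacher agreement: {agreement(student):.3f}")
```

Running the script prints how often the student agrees with the teacher on fresh inputs it was never trained on. The design point to notice is that the student never reads the teacher's parameters; everything it learns comes from observed outputs, which is why defenses tend to focus on who is sending queries and how many.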

Technical Defense: AI Watermarking and Behavioral Monitoring

In response to these extraction attempts, Anthropic has deployed additional security layers. Its systems detected abnormal activity from botnets designed to extract the model's reasoning behavior through its outputs, since the underlying weights are never exposed through the API. To combat this, leading AI labs are also developing digital watermarking techniques that hide statistical signals within model responses. These "invisible signatures" could leave a detectable trace if the text is later used to train a rival model, providing forensic evidence of distillation and IP theft.
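
One published approach to such "invisible signatures" is the green-list watermark of Kirchenbauer et al. (2023): at each step, a secret key and the previous token pseudo-randomly mark part of the vocabulary as "green," generation is nudged toward green tokens, and a detector later counts how many green tokens appear compared with chance. The sketch below is a simplified, hypothetical version of that idea (per-pair hashing instead of a full vocabulary partition, words instead of tokens, a toy generator), and it does not reflect how Anthropic or any other lab actually watermarks output. `SECRET_KEY`, `GAMMA`, and all function names are illustrative assumptions.

```python
import hashlib
import math
import random

SECRET_KEY = "demo-key"  # hypothetical key held by the model provider
GAMMA = 0.5              # expected fraction of candidate tokens marked "green"

def is_green(prev_token: str, token: str, key: str = SECRET_KEY) -> bool:
    """Pseudo-randomly assign `token` to the green list, seeded by key and previous token."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    # Map the first 8 bytes of the hash to [0, 1) and compare with GAMMA.
    return int.from_bytes(digest[:8], "big") / 2**64 < GAMMA

def watermark_z_score(tokens: list[str], key: str = SECRET_KEY) -> float:
    """z-statistic measuring how far the green-token count exceeds chance."""
    t = len(tokens) - 1  # number of scored (previous, current) pairs
    if t <= 0:
        return 0.0
    greens = sum(is_green(p, c, key) for p, c in zip(tokens, tokens[1:]))
    return (greens - GAMMA * t) / math.sqrt(t * GAMMA * (1 - GAMMA))

def generate(vocab: list[str], length: int, watermark: bool, seed: int = 0) -> list[str]:
    """Toy 'model': picks random words, preferring green ones when watermarking."""
    rng = random.Random(seed)
    out = [rng.choice(vocab)]
    while len(out) < length:
        candidates = rng.sample(vocab, 8)
        if watermark:
            green = [w for w in candidates if is_green(out[-1], w)]
            candidates = green or candidates  # fall back if no candidate is green
        out.append(rng.choice(candidates))
    return out
```

Scoring a watermarked sequence of a few hundred tokens this way yields a z-score far above chance, while unwatermarked text stays near 0; the original paper flags text above a threshold of about z = 4. Detecting the signal after the text has passed through another model's training is considerably harder than detecting it in the raw text.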

Innovation Beyond the Giants: Sovereign AI and Green Energy

While the US and China clash over data, two new trends are reshaping the global AI innovation landscape:

- Sovereign AI Models: Many nations (including those in the Middle East) are moving away from dependency on American or Chinese giants. By training domestic “Sovereign Models,” countries aim to secure their national data without being caught in the crossfire of corporate wars.

- Green AI and Energy Efficiency: With Google recently announcing "Iron-Air" battery systems, the new frontier isn't just "smarter" AI, but "greener" AI. Companies like Nvidia and AMD are redesigning their chips to deliver high performance while minimizing energy consumption.

The Humanity Benchmark: The Ultimate Test of Originality

A new challenge has emerged for "distilled" models. A large-scale effort involving 1,000 researchers has launched The Humanity Benchmark, a test designed to expose the weaknesses of models trained on other models' outputs. Preliminary results suggest that while distilled models excel at mathematics and coding, they often fall short on original creativity and philosophical intuition, highlighting a key gap in the "Pattern Mimicking" approach.

Frequently Asked Questions (FAQ)

What is the main conflict in Anthropic vs DeepSeek?

It centers on accusations that DeepSeek used Claude’s outputs to “distill” and clone its advanced reasoning capabilities.

What is an AI Distillation Attack?

It is the unauthorized use of distillation: a smaller model is trained on the outputs of a larger model, without the provider's permission, to mimic its logic at a fraction of the cost.

How does Anthropic protect its intellectual property?

Through behavioral monitoring that flags abnormal query patterns, alongside invisible digital watermarks that AI labs are developing for embedding in model responses.

Why is Sovereign AI gaining popularity?

It allows nations to maintain data privacy and security independently of the US-China tech rivalry.
